|

Christos Sevastopoulos

Since July 2025, I have been working as a Machine Learning Engineer at Liebherr Group, where I focus on developing predictive models for industrial applications. Prior to this, I was a postdoctoral researcher at the University of Illinois Chicago, in the Richard and Loan Hill Department of Biomedical Engineering. I completed my PhD in Computer Engineering at the University of Texas at Arlington, advised by Fillia Makedon. Earlier in my academic path, I earned a Master's in Robotics from the University of Bristol, UK and a Bachelor's degree in Physics from the National & Kapodistrian University of Athens. I have also held research positions at NCSR Demokritos, supervised by Stasinos Konstantopoulos, and interned as a Machine Learning Engineer at GN Group.

My work lies at the intersection of Deep Learning, Computer Vision, and Robotics, aiming to build intelligent systems that support real-world decision-making. I'm especially interested in Transformer-based architectures like BERT and their integration with multimodal data, enabling machines to reason across vision, language, and sensor inputs. My research has spanned topics such as scene understanding, image reconstruction, segmentation, and enhancement, leveraging Generative AI methods including diffusion models and GANs, as well as object detection frameworks like YOLO and Faster R-CNN. I also explore how controlled noise, from adversarial attacks to diffusion processes, can be harnessed to enhance model robustness and image fidelity. A key theme in my work is reconciling human-like reasoning with the structured logic of machine learning, bridging the gap to build AI systems that perform reliably in complex environments.

For a more comprehensive list of my projects, check my GitHub and my Google Scholar.

Some Publications

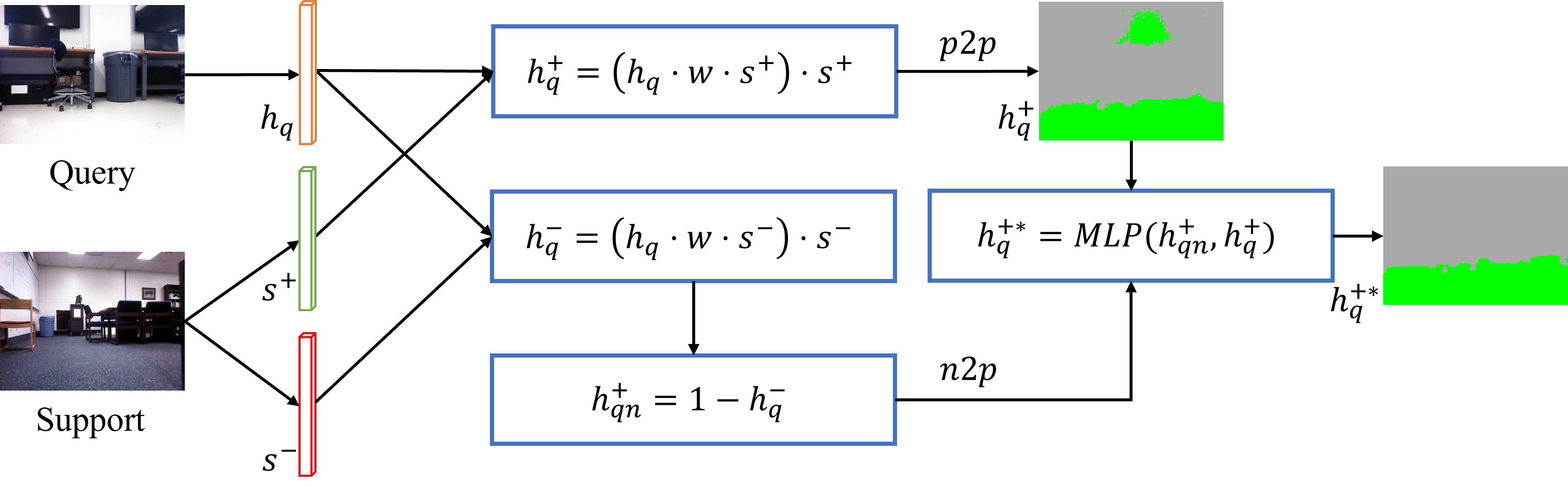

Few-shot Traversability Segmentation of Indoor Robotic Navigation with Contrastive Logits Align

Authors: Qiyuan An, Christos Sevastopoulos, Farnaz Farahanipad, Fillia Makedon

2024 IEEE 20th International Conference on Automation Science and Engineering (CASE)

This paper proposes a Few-Shot Learning (FSL) approach that meta-learns an existing pretrained segmentation model for an indoor traversability segmentation task. The goal is for the segmentation model to adapt to a new, unseen class given only a limited number of annotated examples.

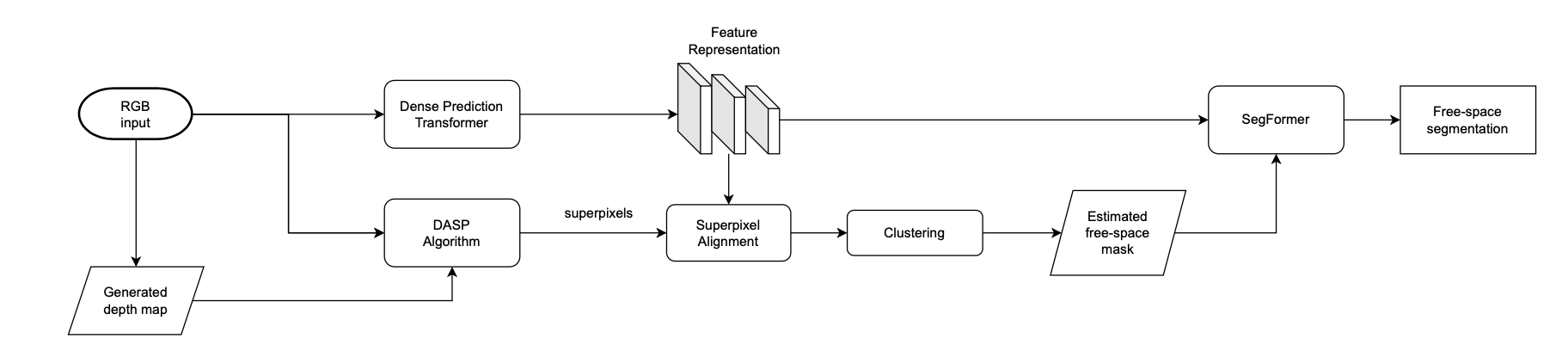

Learning Indoors Free-space Segmentation for a Mobile Robot from Positive Instances

Authors: Christos Sevastopoulos, Joey Hussain, Qiyuan An, Stasinos Konstantopoulos, Vangelis Karkaletsis, Fillia Makedon

2023 Seventh IEEE International Conference on Robotic Computing (IRC)

This paper proposes an indoor free-space segmentation method that associates large depth values with navigable regions. It also employs an unsupervised masking technique that, using positive instances, generates segmentation labels based on textural homogeneity and depth uniformity.

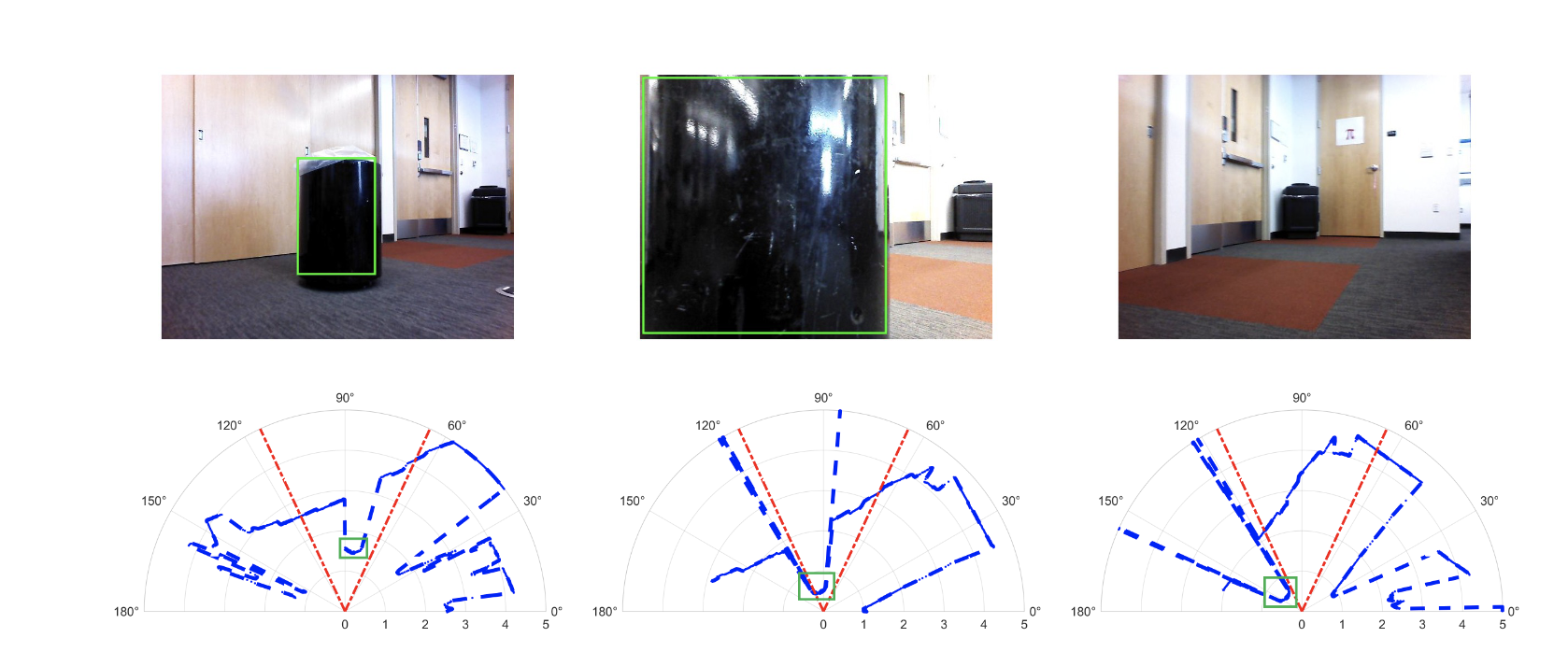

Indoors Traversability Estimation with RGB-Laser Fusion

Authors: Christos Sevastopoulos, Michail Theofanidis, Aref Hebri, Stasinos Konstantopoulos, Vangelis Karkaletsis, Fillia Makedon

2023 IEEE 19th International Conference on Automation Science and Engineering (CASE)

This paper proposes a dual-stream, semi-supervised, attention-based approach that employs feature fusion of RGB and Laser Range Finder (LRF) modalities. Our method leverages the strength of two powerful transformer-based networks, ViT and SegFormer, along with Laser information, to adequately predict whether the scene encountered in the image is safe for a robot to traverse.

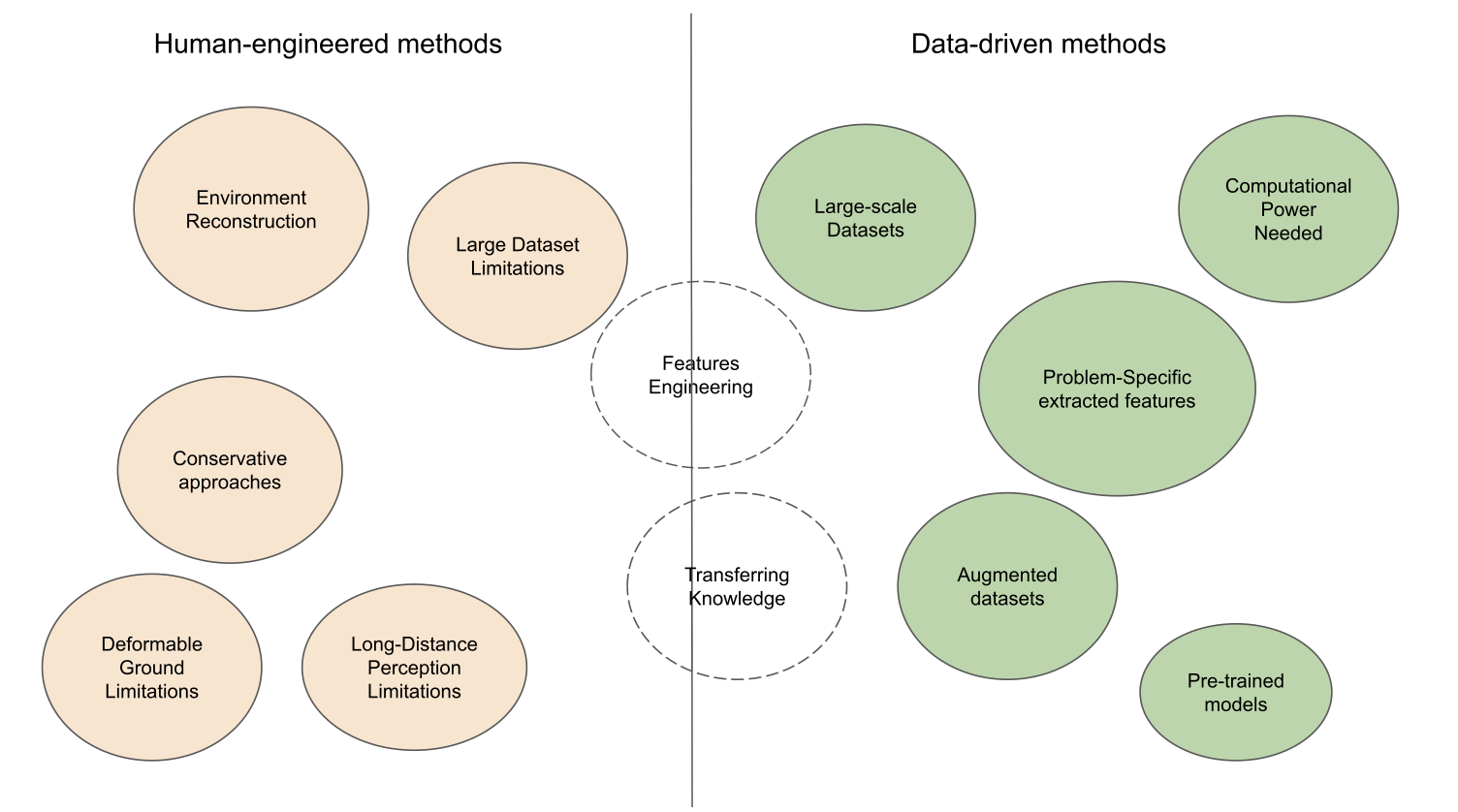

A Survey of Traversability Estimation for Mobile Robots

Authors: Christos Sevastopoulos, Stasinos Konstantopoulos

IEEE Access

This work highlights the merits and limitations of the major steps in the evolution of traversability estimation techniques, covering both non-trainable and machine-learning methods, and shows how the emergence of Deep Learning has created an opportunity for radical improvement in traversability estimation.

Skills

|